PEBBLE ACADEMY · Cloud & AI

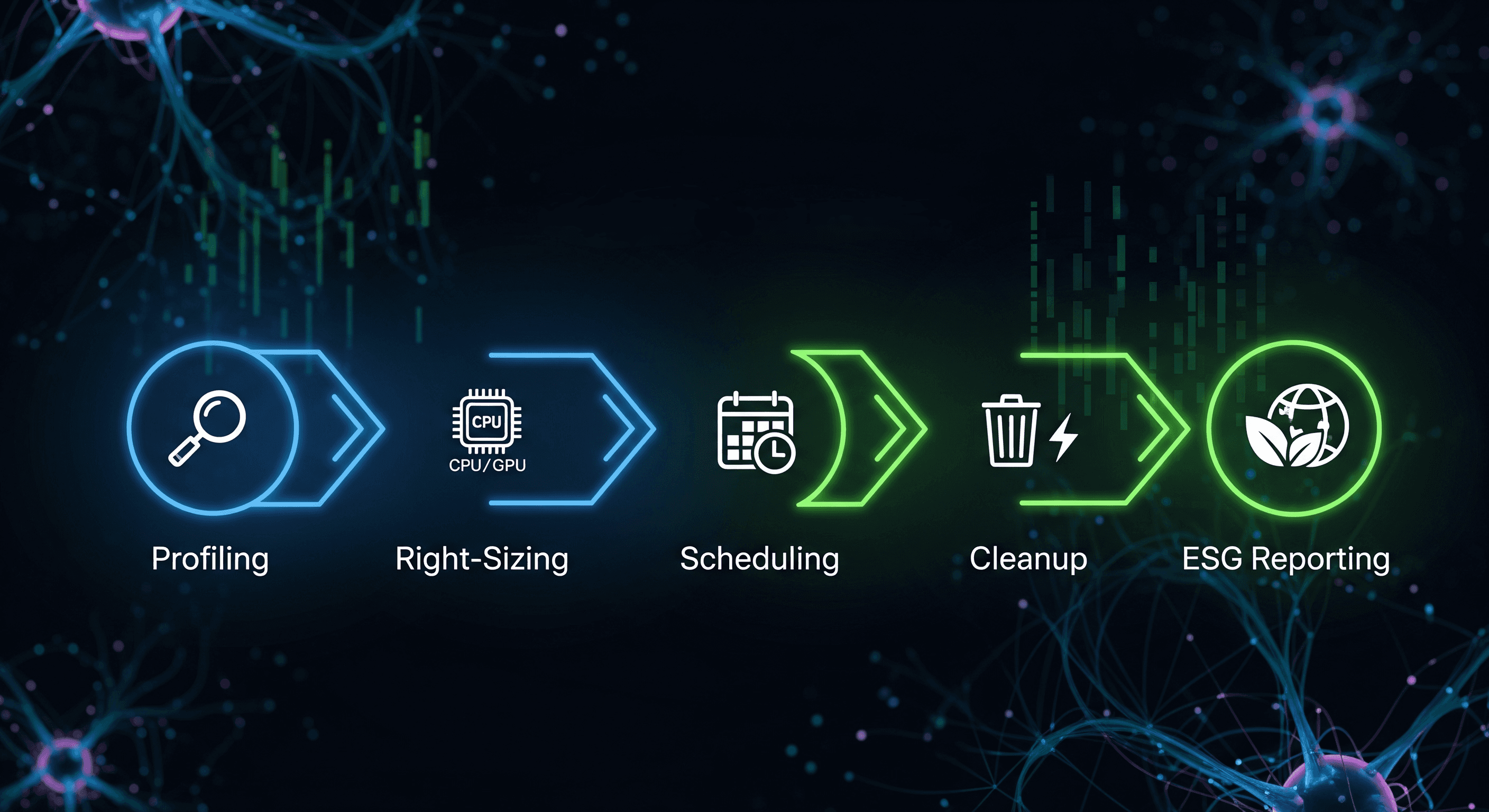

1. Profile and Tag Every AI Job by Project and Team

You can't optimize what you can't attribute. Start by tagging every cluster, namespace, and job with a project, team, and use-case label. The goal isn't compliance — it's making per-team and per-model spend visible to the people who can actually do something about it.

Tip: use project tags and labels to break down usage by team or use case. Then make those breakdowns the default view of your cost dashboard.
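Here is a minimal sketch of both halves of this step. It assumes each job record carries a `labels` dict and a `cost_usd` field; these are hypothetical names, not any specific cloud provider's billing schema. The code flags jobs that are missing required labels, then rolls spend up by any one label so the breakdown can feed a dashboard.

```python
from collections import defaultdict

# Hypothetical label keys; adapt to your own tagging convention.
REQUIRED_LABELS = ("project", "team", "use_case")


def missing_labels(job: dict) -> list[str]:
    """Return the required label keys that a job record lacks or leaves empty."""
    labels = job.get("labels", {})
    return [key for key in REQUIRED_LABELS if not labels.get(key)]


def spend_by(jobs: list[dict], key: str) -> dict[str, float]:
    """Sum cost_usd per value of one label; jobs without it are grouped as 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for job in jobs:
        owner = job.get("labels", {}).get(key) or "untagged"
        totals[owner] += job["cost_usd"]
    return dict(totals)


jobs = [
    {"name": "train-a", "labels": {"project": "search", "team": "ranking", "use_case": "training"}, "cost_usd": 1200.0},
    {"name": "serve-b", "labels": {"project": "search", "team": "ranking", "use_case": "inference"}, "cost_usd": 300.0},
    {"name": "eval-c", "labels": {"project": "chat"}, "cost_usd": 150.0},
]

print([job["name"] for job in jobs if missing_labels(job)])  # ['eval-c']
print(spend_by(jobs, "team"))  # {'ranking': 1500.0, 'untagged': 150.0}
```

The code reports the `untagged` bucket explicitly. This keeps unattributed spend visible on the dashboard instead of silently dropping it.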

2. Benchmark and Right-Size All Compute Resources

Most AI workloads run on instance types chosen for the worst case and then never revisited. A monthly benchmarking pass against your real traffic often turns up a smaller GPU, a different memory tier, or a regional alternative that delivers the same quality at lower cost.

Pro tip: keep benchmarking on a schedule rather than running it once. Cloud hardware and pricing change quickly, so last quarter's best fit may no longer be the cheapest option.
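One way to turn a benchmarking pass into a decision is to pick the cheapest candidate that meets both the quality target and the latency target. This sketch assumes each candidate has a measured quality score, p95 latency, throughput, and hourly price from your own runs. The instance names and all numbers below are placeholders, not real cloud prices. Cost is normalized per 1,000 requests so that instances with different throughput compare fairly.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str                 # hypothetical instance label
    usd_per_hour: float       # placeholder price
    requests_per_hour: float  # measured throughput on your real traffic
    quality: float            # e.g. eval accuracy on a held-out set
    p95_latency_ms: float

    @property
    def usd_per_1k_requests(self) -> float:
        return self.usd_per_hour / self.requests_per_hour * 1000


def right_size(candidates, min_quality, max_p95_ms):
    """Return the cheapest candidate (per 1k requests) meeting both targets, or None."""
    eligible = [c for c in candidates
                if c.quality >= min_quality and c.p95_latency_ms <= max_p95_ms]
    return min(eligible, key=lambda c: c.usd_per_1k_requests, default=None)


candidates = [
    Candidate("gpu-large", usd_per_hour=8.0, requests_per_hour=40_000, quality=0.91, p95_latency_ms=120),
    Candidate("gpu-medium", usd_per_hour=3.0, requests_per_hour=20_000, quality=0.91, p95_latency_ms=180),
    Candidate("gpu-small", usd_per_hour=1.0, requests_per_hour=8_000, quality=0.87, p95_latency_ms=250),
]
best = right_size(candidates, min_quality=0.90, max_p95_ms=200)
print(best.name, round(best.usd_per_1k_requests, 3))  # gpu-medium 0.15
```

In this example, the smallest instance is cheapest per request but fails the quality bar. The filter-then-minimize order stops cost from overriding the quality and latency requirements.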

3. Routinely Detect and Eliminate Idle Resources

In many AI stacks, the single largest waste category is GPUs that are reserved but not actively training or serving. Build a sweeper that tracks utilization on each accelerator and flags any device that has stayed below your utilization floor for the past N hours. Pair it with a deletion policy for orphaned volumes and stale checkpoints.
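Here is a minimal sketch of the sweeper's core check. It assumes you already collect per-accelerator utilization samples, for example from your monitoring stack, as `(timestamp, percent)` pairs. The floor, window, and device names are hypothetical defaults to tune. A device is flagged only if its peak utilization in the lookback window stays below the floor. The window must also contain enough readings, so a monitoring gap is not mistaken for idleness.

```python
from datetime import datetime, timedelta


def idle_devices(samples, now, floor_pct=5.0, window=timedelta(hours=6), min_samples=3):
    """Return device IDs whose utilization stayed below floor_pct for the whole window.

    samples maps a device ID to a list of (timestamp, utilization_percent) tuples.
    Devices with fewer than min_samples readings in the window are skipped.
    """
    start = now - window
    flagged = []
    for device, readings in samples.items():
        recent = [pct for ts, pct in readings if start <= ts <= now]
        # Use the peak, not the mean, so a bursty-but-active job is not flagged.
        if len(recent) >= min_samples and max(recent) < floor_pct:
            flagged.append(device)
    return sorted(flagged)


now = datetime(2024, 1, 1, 12, 0)
hourly = [now - timedelta(hours=h) for h in range(6)]
samples = {
    "node1/gpu0": [(ts, 1.0) for ts in hourly],   # reserved, doing nothing
    "node1/gpu1": [(ts, 85.0) for ts in hourly],  # busy training
    # mostly quiet, but did real work five hours ago
    "node2/gpu0": [(ts, 2.0) for ts in hourly[:-1]] + [(hourly[-1], 60.0)],
}
print(idle_devices(samples, now))  # ['node1/gpu0']
```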

Common pitfall: forgetting to delete temporary storage after training can quietly add five figures a month.
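A deletion policy for orphaned volumes and stale checkpoints can start as a pure filter over a storage inventory. This sketch assumes hypothetical record fields (`kind`, `attached_to`, `run`, `last_modified`). It also assumes a retention rule of keeping the newest N checkpoints per run. The function only returns candidates and leaves the actual deletion, and any dry-run review, to your own tooling.

```python
from datetime import datetime, timedelta


def cleanup_candidates(items, now, max_age=timedelta(days=7), keep_latest=2):
    """Return IDs of orphaned volumes and stale checkpoints that the policy would delete."""
    doomed = []
    checkpoints_by_run = {}
    for item in items:
        if item["kind"] == "volume":
            # Orphaned: attached to no job and untouched for longer than max_age.
            if item["attached_to"] is None and now - item["last_modified"] > max_age:
                doomed.append(item["id"])
        elif item["kind"] == "checkpoint":
            checkpoints_by_run.setdefault(item["run"], []).append(item)
    for ckpts in checkpoints_by_run.values():
        # Always keep the newest keep_latest checkpoints per run; older ones past max_age go.
        ckpts.sort(key=lambda c: c["last_modified"], reverse=True)
        doomed.extend(c["id"] for c in ckpts[keep_latest:] if now - c["last_modified"] > max_age)
    return sorted(doomed)


now = datetime(2024, 3, 1)
items = [
    {"id": "vol-scratch", "kind": "volume", "attached_to": None, "last_modified": now - timedelta(days=30)},
    {"id": "vol-live", "kind": "volume", "attached_to": "train-a", "last_modified": now - timedelta(days=30)},
    {"id": "ckpt-1", "kind": "checkpoint", "run": "train-a", "last_modified": now - timedelta(days=20)},
    {"id": "ckpt-2", "kind": "checkpoint", "run": "train-a", "last_modified": now - timedelta(days=10)},
    {"id": "ckpt-3", "kind": "checkpoint", "run": "train-a", "last_modified": now - timedelta(days=1)},
]
print(cleanup_candidates(items, now))  # ['ckpt-1', 'vol-scratch']
```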

4. Batch and Schedule Jobs for Cost and Sustainability

Spot capacity, cheaper regions, and lower-carbon hours are all available — if your scheduler can wait. Group flexible jobs (offline training, evals, batch inference) and run them when conditions are favorable. Reserve always-on clusters for traffic that genuinely can't move.
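Here is a sketch of the "wait for favorable conditions" decision. It assumes you have hourly forecasts of price and grid carbon intensity; the values and the blending weight below are hypothetical. The function picks the start hour that minimizes a weighted sum of normalized cost and carbon over the job's run, subject to finishing before the deadline.

```python
def best_start_hour(prices, carbon, duration, deadline, carbon_weight=0.5):
    """Return the start hour that minimizes blended cost and carbon for a flexible job.

    prices[h] and carbon[h] are forecasts for hour h (e.g. USD per GPU-hour and
    gCO2e per kWh). The job runs for `duration` consecutive hours and must end
    by `deadline` (an hour index, exclusive). Each signal is scaled by its
    maximum so the two are comparable before weighting.
    """
    if len(prices) != len(carbon):
        raise ValueError("price and carbon forecasts must cover the same hours")
    if duration > deadline or deadline > len(prices):
        raise ValueError("job cannot finish before the deadline with this forecast")
    max_price, max_carbon = max(prices), max(carbon)

    def score(start):
        hours = range(start, start + duration)
        cost = sum(prices[h] / max_price for h in hours)
        co2 = sum(carbon[h] / max_carbon for h in hours)
        return (1 - carbon_weight) * cost + carbon_weight * co2

    return min(range(deadline - duration + 1), key=score)


prices = [3.0, 3.0, 2.0, 1.0, 1.0, 2.0, 3.0, 3.0]
carbon = [400, 380, 300, 200, 150, 250, 420, 450]
print(best_start_hour(prices, carbon, duration=2, deadline=8))  # 3
```

The deadline is what separates flexible jobs from always-on traffic. A tight deadline shrinks the search range until the job effectively runs now.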

Pro tip: batch low-priority jobs to maximize utilization and minimize idle time.
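Batching low-priority work onto fewer accelerators is a bin-packing problem. A common greedy heuristic is first-fit decreasing: sort jobs from largest to smallest, then place each one on the first GPU with room. This sketch assumes jobs are described only by GPU memory demand in GB. That is a simplification, since real schedulers also weigh compute, interconnect, and runtime. The job names and the 80 GB capacity are hypothetical.

```python
def first_fit_decreasing(jobs, capacity_gb):
    """Pack jobs (name -> GB of GPU memory) onto GPUs using first-fit decreasing.

    Returns a list of GPUs, each a list of job names. FFD is a fast heuristic;
    it is not guaranteed to find the minimum number of GPUs.
    """
    gpus = []  # each entry: [free_gb, [job names]]
    for name, need in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        if need > capacity_gb:
            raise ValueError(f"{name} needs {need} GB, more than one GPU holds")
        for gpu in gpus:
            if gpu[0] >= need:
                gpu[0] -= need
                gpu[1].append(name)
                break
        else:
            # No existing GPU has room, so open a new one.
            gpus.append([capacity_gb - need, [name]])
    return [names for _, names in gpus]


jobs = {"eval-a": 30, "eval-b": 50, "embed-c": 20, "eval-d": 40, "batch-e": 20}
print(first_fit_decreasing(jobs, capacity_gb=80))
# [['eval-b', 'eval-a'], ['eval-d', 'embed-c', 'batch-e']]
```

In this example, five jobs fill two GPUs completely instead of occupying five partially used ones.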

5. Integrate ESG Metrics into All Reporting

Track energy and carbon alongside cost on every ML run. Surface the numbers in the same dashboards your platform team already uses for budget and reliability. When sustainability is a footer in a separate quarterly slide, it gets ignored. When it sits next to spend per training run, it gets optimized.
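A common first-order way to estimate these numbers is an approximation, not a measurement standard. Per-run energy is total average accelerator power times runtime, scaled by the facility's power usage effectiveness (PUE) to account for cooling and overhead. Carbon is that energy times the grid's carbon intensity. Here $n$ is the number of accelerators, $\bar{P}_i$ is the average draw of accelerator $i$ in kW, $t$ is the runtime in hours, and $I_{\text{grid}}$ is the grid intensity in gCO₂e/kWh:

```latex
E_{\text{run}} = \mathrm{PUE} \cdot t \sum_{i=1}^{n} \bar{P}_i \quad [\text{kWh}],
\qquad
C_{\text{run}} = E_{\text{run}} \cdot I_{\text{grid}} \quad [\text{gCO}_2\text{e}]
```

Take hypothetical inputs of 8 GPUs averaging 0.4 kW for 10 h at a PUE of 1.2. Then $E_{\text{run}} = 1.2 \cdot 10 \cdot 3.2 = 38.4$ kWh. At 400 gCO₂e/kWh, that is about 15.4 kg CO₂e. The estimate counts accelerator power only, so it understates a run's full footprint.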

Checklist: add energy and carbon tracking to every ML run, and visualize the results where engineers will actually see them.
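Here is a sketch of what tracking energy and carbon alongside cost can look like in practice. Each run produces one record that carries spend, energy, and carbon together, so a dashboard can plot them side by side. The field names, the PUE default of 1.2, and the example inputs are hypothetical. In a real pipeline, the power figure would come from measured telemetry and the grid intensity from your provider or a regional data source.

```python
from dataclasses import dataclass, asdict


@dataclass
class RunFootprint:
    run_id: str
    team: str
    cost_usd: float
    energy_kwh: float
    co2e_kg: float


def footprint(run_id, team, gpu_count, avg_gpu_kw, hours, usd_per_gpu_hour,
              grid_g_per_kwh, pue=1.2):
    """Estimate cost, energy, and carbon for one run (accelerator power only)."""
    energy_kwh = gpu_count * avg_gpu_kw * hours * pue
    return RunFootprint(
        run_id=run_id,
        team=team,
        cost_usd=gpu_count * hours * usd_per_gpu_hour,
        energy_kwh=energy_kwh,
        co2e_kg=energy_kwh * grid_g_per_kwh / 1000,  # grams -> kilograms
    )


run = footprint("train-a", "ranking", gpu_count=8, avg_gpu_kw=0.4, hours=10,
                usd_per_gpu_hour=2.5, grid_g_per_kwh=400)
print(asdict(run))  # one row for the same dashboard that shows spend
```

The record carries the same `team` key used for cost attribution. That way, carbon rolls up along the same breakdowns as spend.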

Ready to Automate Every Step?

Pebble automates the entire checklist — tagging, benchmarking, idle detection, smart scheduling, and ESG reporting — out of the box. Request a demo to see it run against your stack.